A practical look at where AI moderation helps and where human moderation still matters.

AI is starting to move into one of the most human parts of research: the interview itself. As adoption grows, the conversation is shifting toward a more practical question: where does AI moderation work well, and where does it fall short?

For clarity, the comparison here is between traditional qualitative interviews led by a live human moderator (either in person or virtually) and AI-led interviews that, at least for now, are primarily text- or voice-based. This discussion focuses on one-on-one interviews, where most AI moderation is currently being applied.

The pitch is easy to understand. AI moderators can run many interviews at once, stay close to a guide, and return transcripts and summaries quickly. For structured projects, that can be genuinely useful.

But the real decision is about fit.

The question that matters is not whether AI-moderated interviews work at all (they do), but where they add value, where they flatten the conversation, and where human moderation is still critical.

What the evidence suggests so far

Early evidence points to a more nuanced reality than either advocates or skeptics often assume.

Conversational systems can draw out richer open-ended answers than static surveys, especially when they can ask clarifying follow-ups in the moment. In practice, this means AI can move beyond simple data collection and begin to approximate elements of qualitative depth, particularly in structured settings.

A range of studies has found higher engagement and more detailed responses in conversational formats than in standard online surveys. At the same time, there can be trade-offs, including a slightly less positive respondent experience in some cases.

Just as important, people often seem more willing to open up to AI than many expect. Prior research shows that levels of self-disclosure can be similar whether respondents believe they are talking to a chatbot or to a person. That challenges a common assumption that human moderators are always necessary to build openness and trust.

Interview mode matters too. Text can feel more private and lower pressure, while voice can feel more natural and can produce longer, more layered answers. The implication is not that one is better, but that each shapes the type of data collected.

Some research suggests text-based interviews can encourage greater disclosure of sensitive information, while voice-based approaches may generate richer, more expansive responses. Both can be true at once: voice may yield richer stories, while text may feel safer.

Where AI-moderated interviews are a strong fit

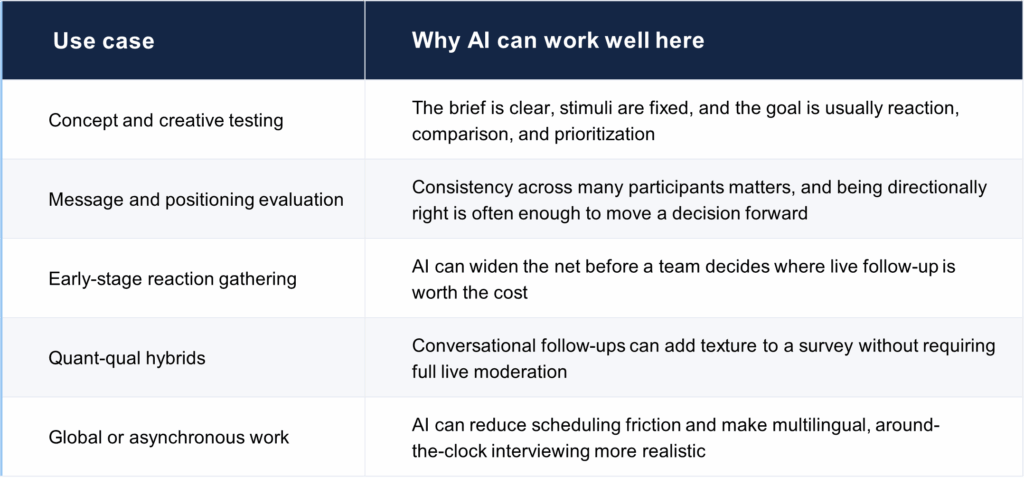

AI moderation is strongest when the job is structured, stimulus-led, and directional rather than deeply interpretive. In those settings, consistency is an advantage rather than a limitation. Recent industry syntheses of AI moderation point to a consistent cluster of good-fit use cases: concept testing, message evaluation, structured stimulus reaction, high-scale qualitative validation, and pre-work before live interviews.

In these kinds of studies, the value is not that AI is more insightful than people. The value is that it can make qualitative-style listening available more quickly in projects that otherwise would have been done with lighter methods – or not done at all.

Where the limitations show up

Probing is still a craft

Human moderation still matters because probing isn’t just a feature; it is a skill. Good moderators know when to clarify, when to stay silent, when to challenge, and when a seemingly minor comment is actually the most important thread in the interview. Probing is central to eliciting rich, deep data, and while AI can probe (sometimes surprisingly well), it can also fall back on generic prompts, miss the emotional center of a response, or move on too early.

Context and continuity

Human moderators build continuity across the conversation. They remember what someone said ten minutes earlier, notice contradictions, and connect separate moments into a bigger story. AI systems are improving, but many interviews still feel more like a series of competent turns than a truly cumulative conversation.

Emotional nuance

The limitations of AI moderation are most evident when the topic is emotionally loaded, identity-driven, culturally specific, or strategically high-stakes. In those moments, the moderator is not just collecting words. They are reading hesitation, adjusting tone, noticing what a participant avoids, and deciding whether to push, pause, or reframe. That judgment remains hard to automate well.

AI moderation still has limited ability to interpret non-verbal cues. Body language, facial expressions, and subtle physical reactions often provide important context in qualitative interviews. Small signals like a pause before answering, a change in posture, or a facial expression can indicate discomfort, uncertainty, or unarticulated meaning. These cues guide how a moderator responds in the moment and can determine how far to push or where to go next. Without them, AI is working with a narrower view of the participant experience.

Trust, privacy, and uneven adoption

One important consideration: willingness to engage with AI isn’t evenly distributed. Recent research found that privacy concerns and preference for human interaction still act as barriers, and that adoption differs across demographic and regional groups. So, even if AI moderation works methodologically, it won’t feel equally acceptable (or comfortable) to every audience.

Exploratory discussion is still a human strength

Human moderation still matters when the conversation is meant to define the problem, not just respond to it. In early-stage stakeholder discussions and exploratory interviews, the goal is often to surface ambiguity, identify what is not yet understood, and follow emerging threads that help shape the business challenge itself. That requires judgment about when to pivot, when to reframe, and when an offhand comment signals something more important underneath. AI can work well when the objective and path are clearly defined, but it is less reliable when the conversation itself is part of figuring out what the real question should be.

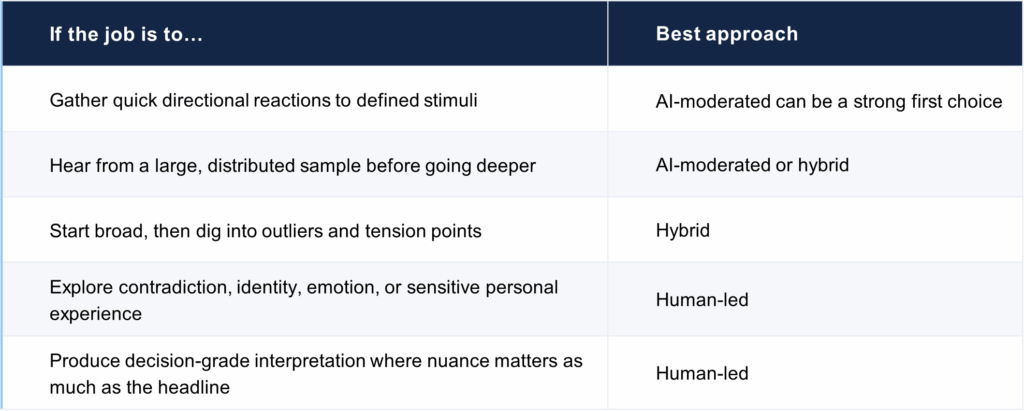

A practical decision rule

This is not really an AI-versus-human decision. It’s a fit question: what kind of conversation does the project require, and how much interpretation do you need?

Where to land

The teams that will get the most from AI moderation will be the ones that don’t ask it to do everything. They will use it where structure, scale, and speed matter, and keep humans close to design, QA, interpretation, and the moments where ambiguity starts to matter most.

Ready to explore where this fits?

Contact us to discuss how AI-moderated and human-moderated approaches can work together for your next study: where AI can help, where human moderation should stay in the lead, and where a hybrid design is likely to give you the strongest answer.

About KS&R

KS&R is a nationally recognized strategic consultancy and marketing research firm that provides clients with timely, fact-based insights and actionable solutions through industry-centered expertise. Specializing in Technology, Business Services, Telecom, Entertainment & Recreation, Healthcare, Retail & E-Commerce, and Transportation & Logistics verticals, KS&R empowers companies globally to make smarter business decisions. For more information, please visit www.ksrinc.com.